The Math Feud That Quietly Built the Modern World

How many riffle shuffles randomize a deck? How can you forecast tomorrow’s weather, model nuclear chain reactions, rank web pages, and guess the next word you’ll type—all with the same idea? The unlikely answer starts with a bitter argument in Russia more than a century ago and ends with a toolkit that powers search, finance, physics, and AI: Markov chains (plus their cousin, the Monte Carlo method).

The Strange Math That Predicts (Almost) Anything

A feud over “free will” that changed probability

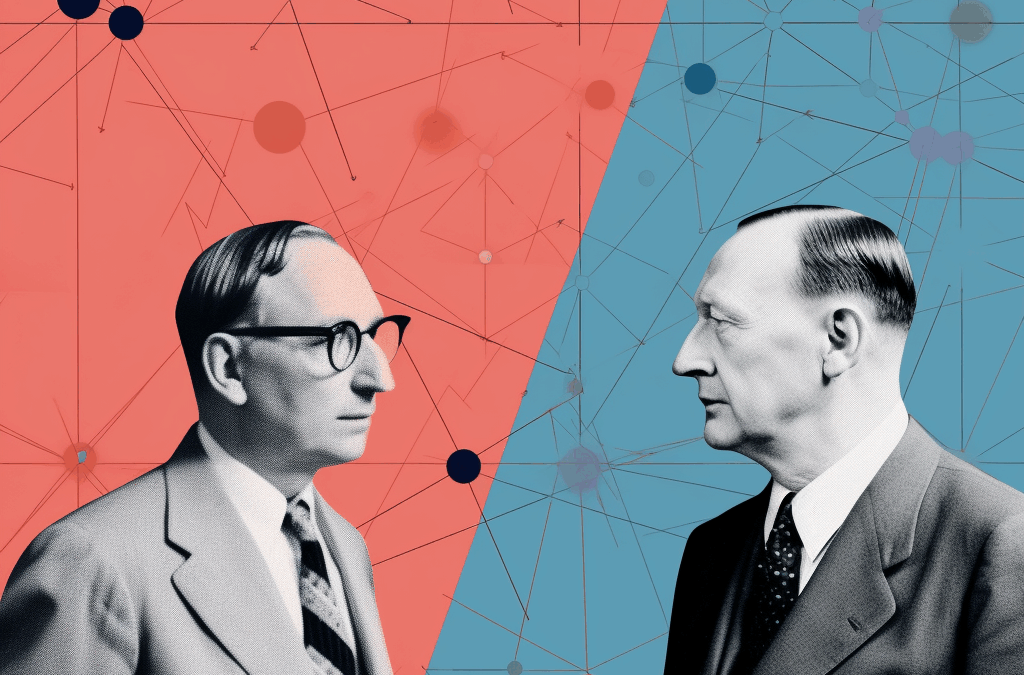

In early-1900s Russia, mathematicians picked political sides. Pavel Nekrasov, a deeply religious supporter of the Tsar, argued that whenever the law of large numbers holds (averages settle toward their expected values as trials accumulate), the underlying events must be independent. He read that independence as evidence of free will.

Andrey Markov, an atheist with a taste for rigor (and for disputes), set out to show Nekrasov was wrong. Independence wasn’t required for the law of large numbers to appear.

His test bed? Text. In Pushkin’s Eugene Onegin, the chance that a letter is a vowel depends on the previous letter: the observed frequencies of the vowel-vowel, vowel-consonant, consonant-vowel, and consonant-consonant pairs differ from what independent letters would produce. Markov built a two-state machine, vowel and consonant, with transition probabilities estimated from the poem. Running the chain reproduced the poem’s overall vowel/consonant ratio. That settled it: dependent processes can still converge. The law of large numbers didn’t need independence, and so said nothing about free will.

Markov chain (informal): a system that hops between states, where the probability of the next state depends only on the current state (memoryless), via a matrix of transition probabilities.
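A minimal sketch of Markov’s experiment, under stated assumptions: the short English `sample` string stands in for the poem, and the helper names (`to_states`, `estimate_transitions`, `simulate_vowel_share`) are hypothetical. It estimates the 2×2 transition matrix from adjacent letters, then runs the chain to check that the long-run vowel share lands near the share observed in the text.

```python
import random
from collections import Counter

VOWELS = set("aeiou")

def to_states(text):
    """Map letters to 'V' (vowel) or 'C' (consonant); spaces and punctuation are dropped."""
    return ["V" if ch in VOWELS else "C" for ch in text.lower() if ch.isalpha()]

def estimate_transitions(states):
    """Count adjacent pairs and normalize each row into probabilities."""
    pair_counts = Counter(zip(states, states[1:]))
    matrix = {}
    for cur in "VC":
        row_total = sum(pair_counts[(cur, nxt)] for nxt in "VC")
        matrix[cur] = {nxt: pair_counts[(cur, nxt)] / row_total for nxt in "VC"}
    return matrix

def simulate_vowel_share(matrix, steps, seed=0):
    """Run the two-state chain and return the fraction of steps spent in 'V'."""
    rng = random.Random(seed)
    state, vowels = "C", 0
    for _ in range(steps):
        state = "V" if rng.random() < matrix[state]["V"] else "C"
        vowels += state == "V"
    return vowels / steps

# Illustrative stand-in text (an assumption, not Markov's data).
sample = ("the quick brown fox jumps over the lazy dog "
          "and keeps running through the quiet meadow")
states = to_states(sample)
P = estimate_transitions(states)
observed = states.count("V") / len(states)
simulated = simulate_vowel_share(P, 200_000)

print("transition matrix:", P)
print("observed vowel share:", round(observed, 3))
print("simulated vowel share:", round(simulated, 3))
```

The two shares should agree to within a couple of percentage points; the small gap comes from the ends of a short sample and from sampling noise.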

From poetry to plutonium: Monte Carlo is born

Jump to Los Alamos, 1940s. Stanislaw Ulam, recovering from illness and passing time with Solitaire, wonders: “What fraction of randomly shuffled games are winnable?” Exhaustive analysis is impossible; there are 52! deals. But you can simulate many random deals and estimate the answer statistically.
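To make the idea concrete, here is a small sketch that swaps in a simpler question than Solitaire winnability (an assumption made so the answer can be checked): after a random shuffle, how often does no card end up in its original position? The exact answer is known to be very close to 1/e ≈ 0.368, so the simulated estimate can be compared against it. The function names are hypothetical.

```python
import random

def no_card_in_place(deck_size, rng):
    """Shuffle a fresh deck and report whether every card moved."""
    deck = list(range(deck_size))
    rng.shuffle(deck)
    return all(card != position for position, card in enumerate(deck))

def estimate_probability(trials, deck_size=52, seed=1):
    """Monte Carlo estimate: fraction of random deals with the property."""
    rng = random.Random(seed)
    hits = sum(no_card_in_place(deck_size, rng) for _ in range(trials))
    return hits / trials

estimate_52 = estimate_probability(50_000)
print("estimated probability:", round(estimate_52, 4))
```

The same pattern applies to Ulam’s question: replace `no_card_in_place` with a function that plays out one deal and returns whether it was won.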

Together with John von Neumann, Ulam ports that insight to neutrons inside a reactor or bomb core, where each neutron’s fate (scatter, escape/absorb, or fission) depends on its current energy and location. They:

- Model neutron behavior as a Markov chain over physical states.
- Sample many random trajectories and tally outcomes to estimate the multiplication factor k (average new neutrons per neutron).
- Repeat to get a distribution, with no closed-form solution of differential equations required.
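The three steps above can be sketched with a deliberately toy model. Everything numeric here is a made-up assumption for illustration: the per-interaction probabilities, the offspring counts, and the fact that the "state" ignores energy and position entirely. The point is only the shape of the method: follow one neutron at a time, tally what it produces, and average.

```python
import random

# Hypothetical per-interaction probabilities, chosen only for illustration.
P_SCATTER = 0.60   # neutron survives and keeps moving
P_LOST = 0.25      # neutron is absorbed or escapes
P_FISSION = 0.15   # neutron triggers a fission
MEAN_OFFSPRING = 2.5  # illustrative average number of new neutrons per fission

def follow_neutron(rng):
    """Follow one neutron until it is lost or causes fission; return its offspring count."""
    while True:
        u = rng.random()
        if u < P_SCATTER:
            continue          # scattered: same (toy) state, draw again
        if u < P_SCATTER + P_LOST:
            return 0          # absorbed or escaped: no offspring
        # Fission: emit 2 or 3 neutrons with equal chance, so the mean is 2.5.
        return 2 if rng.random() < 0.5 else 3

def estimate_k(histories, seed=2):
    """Average offspring per starting neutron over many simulated histories."""
    rng = random.Random(seed)
    return sum(follow_neutron(rng) for _ in range(histories)) / histories

k_hat = estimate_k(200_000)
# For this toy model the answer is available in closed form, which lets us check the simulation.
k_exact = MEAN_OFFSPRING * P_FISSION / (P_FISSION + P_LOST)

print("simulated k:", round(k_hat, 4))
print("closed-form k for the toy model:", round(k_exact, 4))
```

In the toy case the closed form makes the simulation unnecessary; the simulation earns its keep once the state includes energy, position, and geometry, where no such formula is available.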

They called this random-sampling approach Monte Carlo, after the casino. Today it’s a staple for uncertainty quantification, pricing options, rendering images, and more.

Paging Dr. Markov: how Google beat keyword spam

Mid-1990s search engines mostly counted keyword hits—easy to game with white-on-white text. Larry Page and Sergey Brin reframed the web as a graph: each page is a node (state), each hyperlink a transition. A “random surfer” following links (with an occasional random jump to avoid dead-ends) spends more time on important pages. That long-run time share is the page’s PageRank—the stationary distribution of a giant Markov chain. Quality links matter; link farms don’t, because their votes don’t circulate back from the broader web.
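A minimal sketch of the random-surfer computation on a four-page toy web. The graph, the page names, and the damping value of 0.85 (the chance of following a link rather than jumping at random) are assumptions for illustration, and this is plain power iteration, not a description of Google’s production system.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Approximate the stationary distribution of the random-surfer chain by power iteration."""
    pages = sorted(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page receives an equal share of the random-jump probability.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outlinks in links.items():
            # A dead-end page is treated as linking to every page.
            targets = outlinks if outlinks else pages
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank

# Hypothetical toy web: page "c" collects links from three other pages.
web = {
    "a": ["b", "c"],
    "b": ["c"],
    "c": ["a"],
    "d": ["c"],
}
ranks = pagerank(web)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(page, round(score, 4))
```

On this graph "c" comes out on top and "d", which nothing links to, ends up with only its random-jump share.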

The result: cleaner search, fewer clicks, and the backbone of a trillion-dollar business.

Predictive text and modern language models

Claude Shannon extended Markov’s text idea from vowels vs. consonants to letters and words: the next token depends on recent tokens. That gave early, clunky predictive text. Modern large language models go far beyond simple Markov chains—using attention to weigh which past tokens matter—but the core question remains Markovian in spirit: “Given the current context, what’s next?”
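A word-level version of the simple Markov approach can be sketched in a few lines. The tiny `corpus` and the function names are hypothetical; the model only ever looks at the single previous word, which is exactly why its output reads as clunky.

```python
import random
from collections import defaultdict

def build_bigram_model(text):
    """Record, for each word, the list of words that followed it in the text."""
    words = text.split()
    followers = defaultdict(list)
    for current, following in zip(words, words[1:]):
        followers[current].append(following)
    return followers

def generate(followers, start, length, seed=3):
    """Walk the chain: repeatedly pick a random follower of the current word."""
    rng = random.Random(seed)
    word, output = start, [start]
    for _ in range(length - 1):
        options = followers.get(word)
        if not options:
            break  # the word never had a successor in the corpus
        word = rng.choice(options)  # repeats in the list make frequent followers likelier
        output.append(word)
    return " ".join(output)

# Illustrative stand-in corpus (an assumption).
corpus = "the cat sat on the mat and the dog sat on the rug and the cat saw the dog"
model = build_bigram_model(corpus)
sentence = generate(model, "the", 12)
print(sentence)
```

Conditioning on the previous two or three words instead of one gives more fluent text at the cost of needing far more data to fill in the table.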

(When outputs feed back into future training data, you get feedback loops that break simple memoryless assumptions—one reason curating training corpora matters.)

The card-shuffling surprise

Shuffling is a physical Markov chain: each riffle shuffle moves you to a new deck permutation. The classic result, due to Bayer and Diaconis, is that about 7 good riffle shuffles make a 52-card deck “close enough” to uniform. By contrast, the slow “overhand” shuffle mixes painfully: it takes on the order of thousands of shuffles to reach comparable randomness. The difference is how strongly each shuffle randomizes the state space.

Key idea: The mixing time of a Markov chain—how fast it forgets its start—depends on the transition structure. Riffles mix globally; overhands barely scratch the surface each step.
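One way to watch a chain forget its start is to simulate riffles and track a single statistic. The sketch below uses the Gilbert-Shannon-Reeds model of a riffle (a standard mathematical idealization, assumed here rather than taken from the text) and estimates how often the original top card is still on top after 1, 4, and 7 shuffles. A perfectly mixed deck would give 1/52 ≈ 0.019. This checks one statistic only, so it illustrates mixing without measuring the full distance to uniform.

```python
import random

def riffle(deck, rng):
    """One Gilbert-Shannon-Reeds riffle: binomial cut, then size-weighted interleave."""
    cut = sum(rng.random() < 0.5 for _ in deck)
    left, right = deck[:cut], deck[cut:]
    shuffled, i, j = [], 0, 0
    while i < len(left) or j < len(right):
        left_remaining = len(left) - i
        right_remaining = len(right) - j
        # Drop the next card from a packet with probability proportional to its size.
        if rng.random() < left_remaining / (left_remaining + right_remaining):
            shuffled.append(left[i])
            i += 1
        else:
            shuffled.append(right[j])
            j += 1
    return shuffled

def top_card_stays(shuffles, trials, seed=4):
    """Estimate the chance that the original top card is on top after `shuffles` riffles."""
    rng = random.Random(seed)
    stays = 0
    for _ in range(trials):
        deck = list(range(52))
        for _ in range(shuffles):
            deck = riffle(deck, rng)
        stays += deck[0] == 0
    return stays / trials

results = {k: top_card_stays(k, trials=3000) for k in (1, 4, 7)}
for k, share in results.items():
    print(f"after {k} riffles: top card unchanged in {share:.3f} of trials")
print(f"uniform target: {1 / 52:.3f}")
```

After a single riffle the top card stays put about half the time; by the seventh the share has fallen to within a fraction of a percentage point of the uniform target.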

Why Markov chains endure

- Memoryless simplification: When the present summarizes the relevant past, you can model wildly complex systems compactly.
- Estimable from data: Transition probabilities can be measured (Pushkin’s letters, clickstreams, weather states).
- Composable with Monte Carlo: When exact math fails, simulate the chain. It is cheap, scalable, and often accurate enough.
- Ubiquity: Queues, epidemics, speech recognition, recommendation, robotics localization, finance. If today’s state largely predicts tomorrow’s, you’re in Markov country.

Quick answers to the opening questions

- How many riffle shuffles for “random”? Roughly 7 (for a decent riffle).
- How do you model a nuclear chain reaction? Treat each neutron’s history as a Markov chain, simulate many histories with Monte Carlo, and tally the multiplication factor k. Historically, scientists used this alongside transport equations to estimate critical mass.
- How do you predict the next word? Treat text as sequences where the next token’s probability depends on the current context; modern models extend this beyond simple Markov chains with attention and deep learning.
- How does Google know which page you want? It models the web as a Markov chain (PageRank) to score quality, then blends that with relevance signals.

A pocket checklist for spotting Markov moments

- Can I define states that summarize everything relevant about “now”?
- Do transitions depend only on the current state (or a short history I can fold into the state)?
- Can I estimate those transitions from data?
- If it’s too hard analytically, can I simulate (Monte Carlo) to approximate outcomes?
- What’s the mixing time: how many steps until the chain forgets where it started?

From a quarrel about free will to the ranking of the internet, the Markov lens turns messy dependency into tractable prediction. And sometimes, it even tells you when your opponent’s shuffle isn’t good enough.
